GitHub Copilot and Other Programming Doom

- Artificial Stupidity with GitHub Copilot from Nov 3, 2021, 6:39am

As I’ve hinted at once or twice, I’m recycling some answers I have written on Quora—and some other writing—and updating them for my current line of thinking.

This post is partly based on…

- Is there any artificial intelligence that can take code in one programming language and optimize it into a number of other programming languages?, which I originally answered on Thursday, July 3rd, 2014.

- Will computer programming be automated in the near future by Artificial Intelligence?, which I originally answered on Saturday, July 12th, 2014.

- Is it true that 80% of IT jobs can be replaced by automation? What does that mean for software developers?, which I originally answered on Sunday, March 26th, 2017.

- Will artificial intelligence ever be good enough to translate legal contracts into language people can understand?, which I originally answered on Saturday, July 14th, 2018.

Obviously, I have edited them substantially to fit together, and to better fit the tone and format of Entropy Arbitrage.

Background

People have described programming on systems like the Colossus computer as resembling instructing the machine to make specific calculations/counts, and after studying the results, providing the next job. Computers of the day didn’t remember intermediate results, and didn’t have the means to act on any intermediate results they had.

This process included—in addition to many technicians and engineers standing around to make repairs—hand-punching paper tape loops and specifying every single instruction that the machine needed to execute to solve the problem at hand.

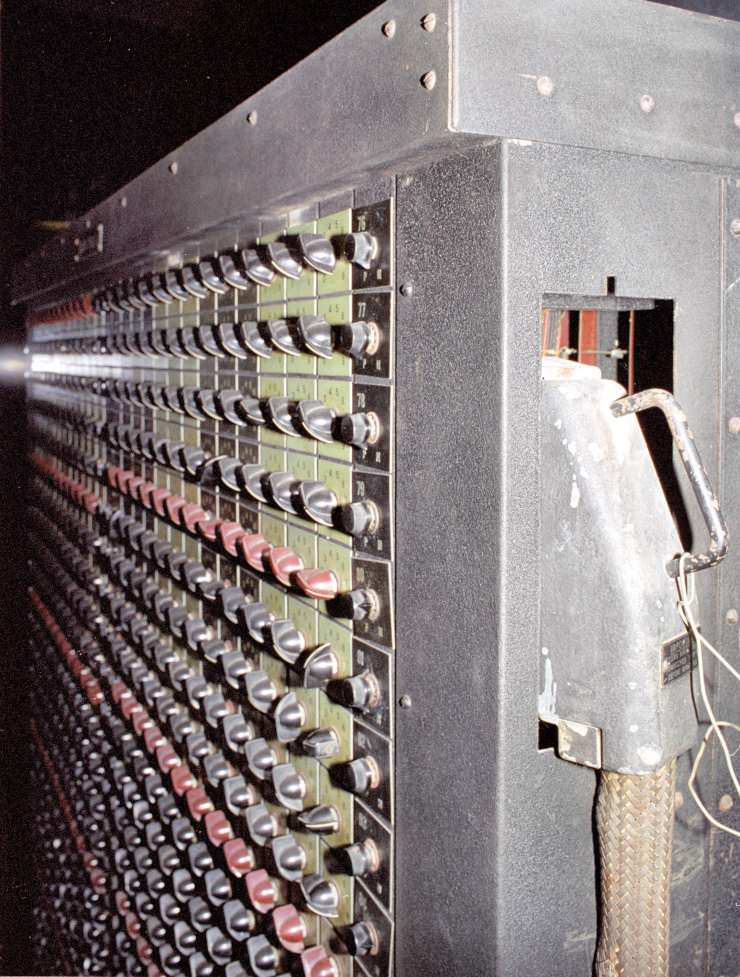

In some ways, the ENIAC was even stranger, basically constructed as a fixed collection of complex problem-solvers that more or less acted as single instructions in a process. Programmers—mostly women, by the way, though we’ve quietly ignored that detail for decades—specified the instructions by setting switches on panels, such as the panel you can see in the post’s header image.

Advances

Today, though? I can write some fairly high-level descriptions of what I want—for some cases, I can even just specify the problem and trust that someone else has solved the problem for everybody—with an editor that will catch a lot of my mistakes for me and make suggestions to improve what I write, probably leveraging frameworks generating a lot of the most boring code and figuring out what libraries I’ll likely need to get things to work, compile it in seconds without me needing to know anything about the underlying computer, then send the whole thing out to a dynamically-scaled cluster of computers.

As technology advances, the question consistently comes up: Is this the end of programming? With such sophisticated tools, can’t managers or even uneducated customers just use those tools directly and cut out the programmers?

Prior Ends of the World

While by no means complete—because not everybody announces every project, and because I don’t want to weigh the post down—I’d like to talk a bit about the various times in the past that we would definitely no longer need programmers.

COBOL

The cheap shot aims at COBOL, the “COmmon Business-Oriented Language.” While the language’s developers intended to commoditize computer hardware by making it easier to move software between mainframes, you’ll occasionally stumble across a contemporary (non-humor) book or article suggesting that, because COBOL’s syntax looks like English, managers will soon have the ability to eliminate their developer teams and do the programming themselves, since the managers already know English, and so don’t need the programmers to “translate” for them.

The fact that most software project managers spent (or still spend) time as developers has probably complicated that sentiment, but the same antipathy and self-hatred still exists towards “management,” as somehow incapable of programming. It only makes the situation worse, when executives use “wives” as the low-water mark for technical ability.

Having used COBOL on a couple of projects, I can tell you that…it’s fine. I hate the idea of breaking up the program into divisions (Identification, Environment, Data, and Procedure), but I started out programming in BASIC, so I don’t worry about the occasional GOTO in my code when I see no other option. And COBOL feels fairly similar to other period languages, once you get used to the syntax.

Diagrams

My kindergarten teacher taught us to read and draw flowcharts, bizarre as that might sound. I never learned why anybody put it in the curriculum, and I’ve never thought to ask students in other classes if they had the same experience, on the rare cases that I run across someone from those days—since I generally prefer to not sound like I might have a nervous breakdown when I get excited to see someone—but we also wired up telephones to a couple of car batteries for one lecture. I know that they didn’t designate it as an “advanced” kindergarten class (we didn’t have those, back then, to my knowledge) or a wealthy school district. Maybe I just had a great teacher who I didn’t appreciate enough.

Digression: For those of you who come from younger cohorts or feel less technically inclined, the phone company previously powered what we now call “landlines” over the phone cables themselves, separate from the electric service. If the phone had 48 volts, you could place a call. In most cases, this meant that neighborhoods or houses without electricity still had phone service. It also meant that, in certain cases, if the phone company’s local office also lost power, you could supply power to the phone—though the company wouldn’t appreciate your ingenuity if they found out—and could sometimes connect to someone if the local switch still worked. You could also use the electricity from the phone line to power small devices, and pull other tricks that gave rise to the phreaking community. In essence, my kindergarten teacher showed us how to build a basic intercom system, by connecting the phones together with power.

Anyway, you can probably see where this is going. Because a flowchart has no fussy syntax rules—well, OK, it actually does, but you don’t type them—even a child can do it, and therefore we don’t really need any programmers, if we only had the technology to draw flowcharts on the screen.

You know what I love (in an ironic way) about this industry, though? “Any fool can write code with flowcharts” obviously flopped, and today, most developers I know would laugh if you started drawing a flowchart to explain something. But Blockly and similar systems in the last decade or so, basically just expensive versions of the same idea, still show up regularly as brilliant new ideas. Nothing in the software industry ever goes away permanently…

In between flowcharts and Blockly, we should also mention UML, which…it’s a flowchart for data, instead of code. Let’s leave it at that, because we collectively spent about ten years when every office I worked in announced that they planned to require every task to include submitting an updated UML diagram, but never actually followed through. If you remember those days, that probably feels like more than enough talk about UML for you, too.

Computer Aided Software Engineering

This feels like a funny category, to me, because parts of the idea exploded and changed the industry for the better, whereas others never manifested. Computer-Aided Software Engineering suggested that we could integrate important tools so tightly into the development process, that developers could afford to come far less experienced and far less talented—and cheaper, of course—because the tools would catch issues before anybody else saw them.

In some cases, this probably worked out. For example, distributed revision control means that developers can keep track of their work in the public repository, without contaminating anybody else’s work, until the time comes to merge the pieces together. Automatic code analysis—“linting”—has saved everybody the embarrassment of revealing certain kinds of mistakes.

Other cases never worked well enough for anybody to release them. I remember seeing demonstrations that would generate code from diagrams—like from the last section—but that never manifested. Several people at the time also told me that multiple companies stood just months away from releasing penetration testing systems that could operate fully automated, able to assimilate results and automatically generate new hypotheses about flaws, with no human intervention. But decades later, penetration testing still takes manual and expensive labor.

And then we had cases where we technically got what developers promised, but the promises had an overblown aspect to them. For example, we built computer science education, for a long time, around proving program correctness; some classes in my college taught programming with tedious documentation requirements about the expectations of various parts of the program, not for human readability, but for the benefit of a hypothetical code verification system. To my knowledge, that still fits the domain of hiring teams of mathematicians to review critical code, rather than something that companies would do to save time. Similarly, the CORBA advocates promised a future where we—anyone—would have the ability to stitch together features that we wanted from many vendors across the Internet, only needing to write original code, but we never got anything like the promised flexibility or ubiquity.

No-Code or Low-Code

Going back at least as far as FileMaker Pro, we have had systems available that mean to take the “coding” out of programming, hoping to make software development accessible to average people. Generally, the product gives you a database with an example-based approach to requesting data, a form builder, and the ability to trigger events when certain things happen.

The general idea suggests that, as long as the application that you want happens to look like what the developers planned for, you probably won’t have much work to do. In reality, while these systems have their advocates—I had a manager who still used FileMaker as late as 2001 for internal tools—the most important skills in using them involve understanding what the developers meant their no-code tool to do, and trying not to exceed those boundaries.

Artificial Intelligence

Things stayed quiet on this front, for a while, but then neural networks came knocking on the door. Now rebranded “machine learning,” we pretend that we’ve cracked artificial intelligence, even though the technique hasn’t changed since long before I took a neural networks class in 1995.

Because machine learning systems have—and I’ll get into this more in a bit—largely replicated every other technique we use for spoofing artificial intelligence, it has become the latest technology to claim to cut down the software development workforce, especially when it comes from one of the Big Five software companies—Amazon, Apple, Facebook, Google, and Microsoft—and so our latest contender enters the ring.

GitHub Copilot

Microsoft has now released GitHub Copilot, a machine-learning tool to generate code to supplement what a human developer has written.

I should note that I haven’t used it, yet. I put myself on the wait list and will probably write a post about the experience when I do. But before I get there, the product seems…problematic.

Update, 2021-11-03: Since writing this post, I have used Copilot and wrote about my experiences with it. You might already know that from a link at the top of the post.

Update, 2022-07-05: GitHub now charges for Copilot access, and Amazon has released their similar CodeWhisperer offering. The two announcements have led various software rights groups to recommend a boycott.

The Machine Learning Twist

The big issue seems to be that, as much as Microsoft/GitHub wants to call it a “code synthesizer, not a search engine,” it still admits that the label won’t always hold, with multiple variations on the following quote.

…the suggestion may contain some snippets that are verbatim from the training set.

This makes sense, of course. From the original press for AlphaGo playing Go, it has seemed obvious that machine learning generally just replicates a combination of Markov processes and alpha-beta pruning, even though it quickly becomes difficult to inspect those intermediate representations if nobody made them explicit parts of the output. That means that the software doesn’t “synthesize” anything. It regurgitates code in various-sized pieces, in such a way that the pieces all fit together seamlessly.

I would personally dare to guess that the candidates then get filtered by a normal “linter”—mentioned with the CASE tools—to make sure that none of the suggestions look like complete garbage. If you follow any actual experts in making neural networks do interesting things—people like author Janelle Shane, for example, rather than someone who wants to sell systems—you know that every list of fun things or a great story that a neural network generated involved generating hundreds or thousands of candidates, then manually picking the best; code has the advantage of having a rigorous grammar, but will still need filtering.
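To make that guess concrete, here is a minimal sketch of what such a filter could look like. It assumes the candidates are Python snippets and that “not complete garbage” only means “the parser accepts it.” Nothing here reflects how Copilot actually works; the `filter_candidates` function and the sample candidates are hypothetical, invented for illustration.

```python
import ast


def filter_candidates(candidates):
    """Keep only the candidate snippets that parse as valid Python.

    This stands in for the hypothetical linter pass guessed at above;
    it does not describe any real product's pipeline.
    """
    survivors = []
    for snippet in candidates:
        try:
            ast.parse(snippet)
        except SyntaxError:
            continue  # Discard anything the parser rejects outright.
        survivors.append(snippet)
    return survivors


# Made-up "generated" suggestions, some of them nonsense.
candidates = [
    "def add(a, b):\n    return a + b\n",
    "def add(a, b) return a + b",
    "total = sum(values",
    "total = sum(values)\n",
]

valid = filter_candidates(candidates)
print(len(valid))  # Two of the four candidates survive.
```

A rigorous grammar makes this kind of automatic rejection cheap, but passing the parser says nothing about whether the surviving code does what anybody wanted.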

In any case, the regurgitation creates a problem, because we don’t know what projects the Copilot team has chosen in their training set. If the creators posted any of it without a public license, using results that include components would produce a derived work, and that would qualify as piracy. If any of the pieces come from code released under the terms of the GPL, then you shouldn’t use it in a project that you didn’t release under the GPL. Even if they released all the training data under the terms of the MIT license, that has terms to comply with, too. That doesn’t even get into whether the training set includes code created in a jurisdiction with “moral rights” on works.

In other words, Microsoft and GitHub might want to convince everyone otherwise, but they have a fairly good chance that their project incites people to violate copyright law. And I’d bet that the licensing agreement to use the service doesn’t indemnify the developers who use Copilot, as a company might do if they could guarantee that they didn’t feed you protected code. More likely, the license disclaims all responsibility for vetting what it recommends to you.

If you think that seems like an overreaction, consider an obvious question: Have they ever mentioned training the model with their own code? Do you see some tiny chance that Copilot will suggest code copied verbatim from Windows, Office, Azure, or GitHub? If not, why not, if Copilot doesn’t create derived works and any licensing problems come from over-active imaginations…?

Keep in mind that, even if Copilot manages to somehow become the tool that magically understands human needs—ha!—if it does so through piracy, lawyers charge significantly more per hour than software engineers do.

Regardless, let’s assume that everything with Copilot operates aboveboard. We still have reasons to assume that it won’t reduce the need for developers.

Compilers Automate, Too

In the simplest interpretation, Copilot acts as a compiler. It looks at code that the developer wrote, and produces an error message, offering corrections.

It acts as a fancy compiler, sure, but if we strip away all the conceptual frills, it takes input code and outputs different code and some warnings. And improved compilers have only increased the market for programmers. Good tools make programmers more cost-effective, meaning that smaller budgets can afford to get things done.

Even in just the twenty-plus years that I’ve professionally programmed—to say nothing of the years that I spent as a hobbyist or student—the difference between how I worked then and how I work today looks like the difference between night and day. Specifically, between the time I start working on code and the end-user gets access to software, it would shock me, if ten percent of my work actually came from my efforts. The amount of automation sometimes seems shocking, when you actually stop to look at it, and it will only get better.

What might this mean? Probably more opportunity, if history serves as any indication. As the automated side of the job gains functionality, people have more ideas for what we can do with it. Not long ago, we never would have considered sending telephone calls and films across computer networks, but today, that has become the default situation.

However, the jobs will change, like they always do. Years ago, I used to work with people who had titles like “Build Engineer,” people whose actual job centered on configuring the compilers and the installation packages that we generated, to make sure that they did everything that the team needed. Today, we mostly see that as a solved problem, and developers almost exclusively work on translating user requirements into solutions. Those that haven’t, have further specialized into “DevOps engineers.” That trend will certainly continue.

Regardless of that trend, programming doesn’t seem likely to go away. No matter what the technology (COBOL, CASE tools, Copilot, whatever), promises that we’ll have “software without programming” always fall short, because someone needs to bridge the gap between what people say and the sort of specific, unambiguous explanation that maximizes the chances that people get what they want. And that person equates to a programmer, even if the job looks like talking conversationally with some sort of artificial intelligence…which it won’t.

Writing Code versus Programming

For at least the thirty-ish years that I’ve paid attention to the software industry, but really going back as far as COBOL, companies have continuously promised a tool just over the horizon that will make it trivial for normal people to write code. Every one of them has achieved the goal, and still become a failure, because writing code isn’t the hard part of programming. Turning ideas into an unambiguous specification makes the job hard, and that requires communication, not code.

I’ll repeat that, because it has made the rounds on Twitter, so I guess maybe I stumbled on something useful.

Writing code isn’t the hard part of programming. Turning ideas into an unambiguous specification makes the job hard, and that requires communication, not code.

Does that clarify why those prior systems haven’t reduced the number of programming jobs? All they do involves simplifying writing code, the easiest part of the job, the part that doesn’t help programmers grow.
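As a small illustration of how much a simple-sounding request leaves undecided, consider “sort these names alphabetically.” The sketch below uses made-up names and three readings of the request that I picked for the example; each takes one line of code, and each produces a different list. Choosing among them requires asking the person who wanted the list, which no code generator can do for you.

```python
# Hypothetical customer names in "family name, given name" form.
names = ["de Vries, Anna", "Zhang, Wei", "adams, Bo", "Brown, Al"]

# Reading 1: plain code-point order, so uppercase sorts before lowercase.
by_code_point = sorted(names)

# Reading 2: ignore case when comparing.
ignoring_case = sorted(names, key=str.casefold)

# Reading 3: ignore case, but order by given name instead of family name.
by_given_name = sorted(names, key=lambda n: n.split(", ")[1].casefold())

for label, result in [
    ("code point", by_code_point),
    ("ignoring case", ignoring_case),
    ("given name", by_given_name),
]:
    print(label, result)
```

None of these handles locale-specific rules, such as whether “de Vries” files under D or V, so even this list of readings remains incomplete.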

Been There, Done That

Again, I haven’t looked at Copilot specifically, but I feel tempted to add a fourth item, something I’ve already alluded to.

Almost every OMG-we-finally-cracked-AI product just generates Markov chains, but without the ability to inspect and debug the generation process.

A Markov process basically acts like a special kind of search engine, that asks: Given the most recent inputs, what looks like the next most likely input? Therefore, while Microsoft might feel correct in saying that they didn’t write a search engine, the odds that they didn’t produce a search engine strike me as low.
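For anyone who hasn’t seen one, a Markov chain over code tokens fits in a few lines. This is a toy sketch under my own assumptions (a made-up one-line “training set” and hypothetical `build_chain` and `suggest` helpers), not a claim about Copilot’s internals. Notice that it can only ever suggest a token that followed the same context somewhere in its training data, which is what makes it feel like a lookup.

```python
import random
from collections import defaultdict


def build_chain(tokens, order=2):
    """Map each run of `order` tokens to the tokens that followed it."""
    chain = defaultdict(list)
    for i in range(len(tokens) - order):
        state = tuple(tokens[i:i + order])
        chain[state].append(tokens[i + order])
    return chain


def suggest(chain, recent, rng=random):
    """Given the most recent tokens, pick a plausible next token.

    Returns None when the training data never contained this context.
    """
    followers = chain.get(tuple(recent))
    if not followers:
        return None
    return rng.choice(followers)


# A tiny, made-up "training set" of code tokens.
corpus = "for i in range ( n ) : total += i".split()
chain = build_chain(corpus, order=2)

print(suggest(chain, ["for", "i"]))     # in
print(suggest(chain, ["no", "match"]))  # None
```

Because the chain here exists as an ordinary dictionary, you can open it up and see exactly why it made a suggestion, and that inspection is the part the neural network versions take away.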

I wouldn’t necessarily call that a bad thing. Modern development absolutely still requires a role for an editor or compiler that—for example—queues up relevant questions from Stack Overflow, so that the developer doesn’t need to hunt for them. But such a feature changes the speed of work, not the kind of work.

Making It Perfect

Let me propose a maybe-controversial assertion: The only way that artificial intelligence—or any product—might displace human software developers would require us to cede our autonomy to computers, in some dystopian future.

To clarify, maybe computers can—one day—produce all the specifics of code. But we only write software in response to a human need, and someone needs to communicate that need and what it means to a computer. Can the person with the need explain it directly?

Possibly, but then we’ll all become programmers, because that becomes the program. Again, this strikes at the problem with these “even your manager will have the ability to develop applications” products. They remove the typing from programming to bridge the gap from one direction, sure. But they never bother to teach people how to think about software or how to solve problems, so they never bridge the gap from the other direction.

Like I said, though, I can see one possible exception. If we feel willing to live in a world where software monitors everything we do and other software automatically gets written and rewritten to squeeze more productivity out of us based on some predefined metric, then it becomes possible for that to stand as the last program that a person will write. At that point, software no longer gets written to serve human needs; we all just watch our pomodoro clocks and shuffle tickets between swim-lanes to please our robot overlords 🤖.

However, I don’t see the utility in removing all discretion and self-direction from human beings. By and large, the historical societies that have attempted to do that have tended to fare poorly. In fact, I may write about this topic, occasionally.

The industry will shift, and it may shift dramatically, but it won’t go away unless we all decide to work for computers rather than have them doing work for us.

Bonus: Legalese

A related question to AI replacing programmers revolves around the idea of AI replacing lawyers, especially when dealing in contract law.

And this has a similar answer, too. People already write legal contracts in a language that non-lawyer people understand, after all, generally the native language of the people involved in the contract. The contracts grow long and complex to remove ambiguity—just like computer programs—and to prevent the relationship from falling apart due to a misunderstanding. They become complex, but they do not become (assuming nobody is trying to commit fraud) complicated. The complexity exists to handle some nuance that might become important.

Therefore, while the answer technically sounds a lot like “yes,” software could act as your contract lawyer in that it can just show you the contract, the answer that a reasonable person would want is “no,” because any simplification of the text reintroduces ambiguity to and potentially changes the meaning of the contract, so you wouldn’t actually understand the document that you want to sign, just an interpretation that overlooks a variety of details.

Robot lawyers, however, introduce an entirely new problem of introducing bias into the relationship. With code, we might not care (though we should) if the examples used to train our AI to sort names were written by a person with no empathy. A list either presents itself in alphabetical order or it doesn’t, after all. But when we start talking about the law, medicine, or any other fields that revolve around deciding people’s fates, we have plenty of examples where machine learning has learned to treat Black people worse than everybody else, or that wealthy people should get what they want, because we have a long history of courts making those biased decisions to draw from. In those fields, handing them off to neural networks equates to depriving people of autonomy, which I covered ☝ back up there.

Preliminary Copilot Verdict

Although this post exists nominally to talk about GitHub Copilot and, again, I haven’t actually used it, I still feel comfortable in saying that it probably won’t change the world.

Given the issues, I want to give it a try for personal projects—where licensing won’t become an issue, since I release most things under GPL-variants, anyway—and might consider it for other open source projects. But it won’t save anybody any more money than UML saved companies in the ’90s.

If I get to the head of the waitlist, and it feels life-changing, though, you’ll all become the first to know…

Update, 2021-11-03: As mentioned above, since writing this post, I have used Copilot and wrote about my experiences with it.

Credits: The header image is ENIAC function table at Aberdeen by Jud McCranie, made available under the terms of the Creative Commons Attribution-Share Alike 3.0 Unported license.


Tags: quora programming career rant John Colagioia

John Colagioia